Which Process for Domain-Driven Design?

How do we fit Domain-Driven Design into our development process? This is a recurring question in many DDD-related discussions.

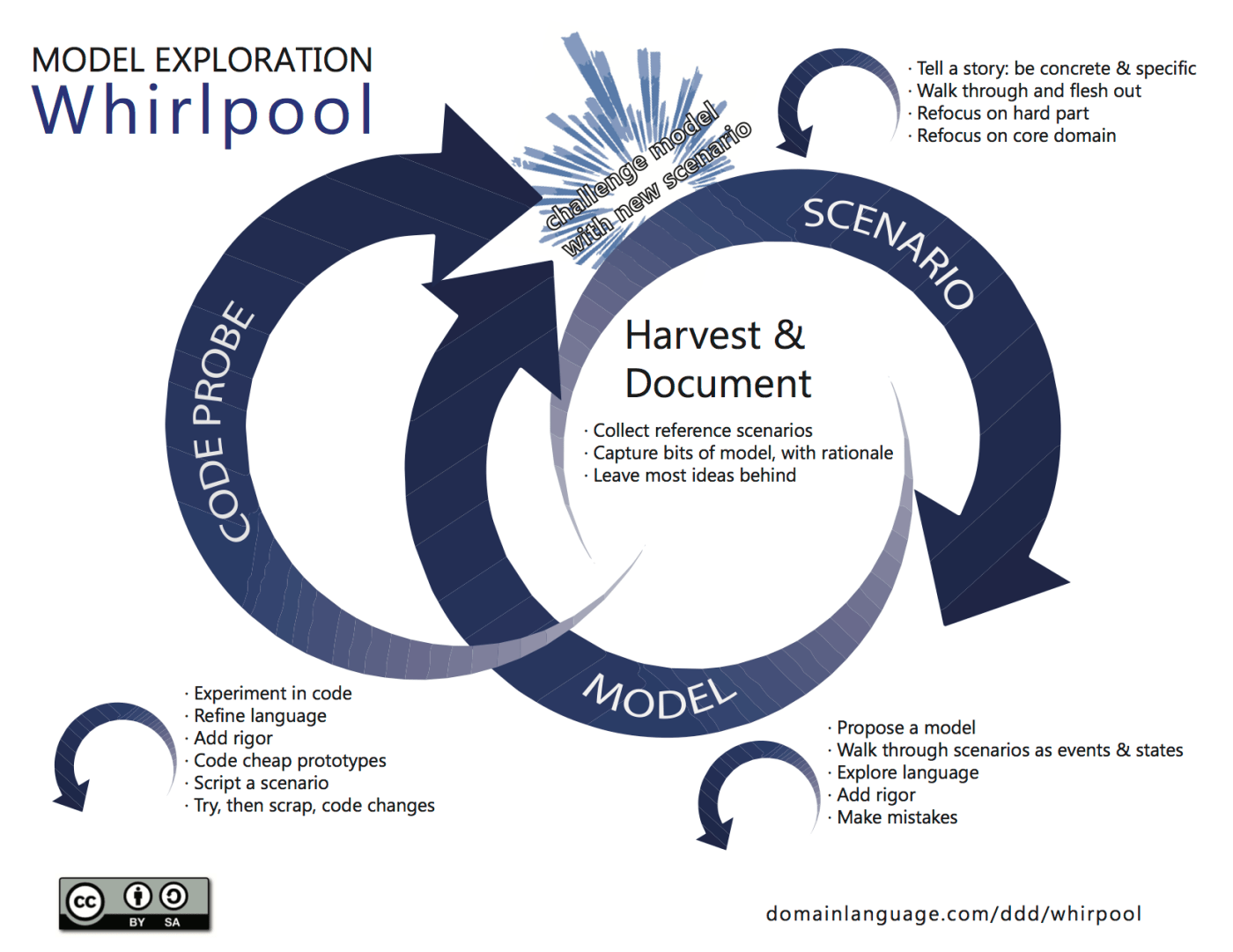

Eric Evans' description of a process is what he calls The Whirlpool Model... which isn't a process!

This may sound disappointing if you're looking for a structured sequential process, but it totally makes sense if you agree with two basic principles:

- Learning is the bottleneck

- A single tool won't be able to tell the whole story.

If learning is the bottleneck, then we should focus on how learning works. Learning works best in immersive sessions, where we collaboratively build a model by combining information from different sources into a progressively more consistent whole.

During these sessions we should challenge our understanding from different perspectives. Something can sound completely plausible during a conversation with our stakeholders, only to leave us baffled when we try to turn that conversation into specifications or working code. So different things can happen: an EventStorming exploration, a quick whiteboard discussion in UML about a specific portion of the system, or an example-driven discussion like "Ok, let's pick what happened last year with J.B., who bought a ticket, then left his company and asked if he could still join the training class", to see whether our understanding falls apart.

Prototyping is modelling

Writing code - possibly quickly, maybe in a sandbox environment - can be a great way to check if our assumptions are sound. At the end of the day, source code is the ultimate model, and gives great feedback too.

How to pick our process

The whirlpool idea is a colourful way to say "there is no process, only feedback loops", which translates to "find what works for you, picking the combination of tools with the highest return on investment in your scenario", where "you" includes you personally, your team, and your organisation.

I can be more precise with a couple of examples.

High-Speed single focus

The way I like to work is to use Big Picture EventStorming to make sure that I am modelling the most important portion of the system, and then drill into a solution in no-prisoners mode. The organisation has just told us that the problem we're working on is top priority, so we want to find the best solution, wasting no time.

In this scenario I need little or no documentation upfront: I have just explored the whole flow (ideally yesterday), the understanding is vivid, and I just need to focus on the solution. BTW, the experts are in the room, so every bit of needed knowledge is within reach.

- Software Design EventStorming, including some UI wireframes, tells me a lot about Events, Boundaries, Policies, Commands, and Read Models... and more if you had a good session.

- We can capture the main scenario quickly with BDD tests in the Given ... When ... Then format, and challenge them with borderline examples.

- We can start prototyping to see if we missed something, often using TDD on a single building block.
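To make the Given ... When ... Then idea a bit more concrete, here is a minimal sketch in Python of the J.B. example mentioned earlier, written as plain functions with `assert` so it runs without a test framework. Everything in it is hypothetical: `Ticket`, `Attendee`, `can_join_class`, the company name `ACME`, and above all the rule "a ticket belongs to the named person, whoever paid for it" are assumptions made for illustration, not the real policy. The point is only that a borderline example turns a vague agreement into a claim the experts can confirm or reject.

```python
from dataclasses import dataclass
from typing import Optional


# Hypothetical model for the J.B. scenario. The business rule encoded in
# can_join_class is an ASSUMPTION for illustration only.
@dataclass(frozen=True)
class Ticket:
    holder: str        # the person named on the ticket
    purchased_by: str  # the company that paid for it


@dataclass(frozen=True)
class Attendee:
    name: str
    employer: Optional[str]  # None once the person has left their company


def can_join_class(ticket: Ticket, attendee: Attendee) -> bool:
    # Assumed rule: a ticket belongs to the named person, whoever paid for it.
    return ticket.holder == attendee.name


def test_main_scenario_holder_joins_the_class():
    # Given J.B. holds a ticket paid for by his company
    ticket = Ticket(holder="J.B.", purchased_by="ACME")
    # When J.B. shows up while still employed there
    jb = Attendee(name="J.B.", employer="ACME")
    # Then he can join the class
    assert can_join_class(ticket, jb)


def test_borderline_holder_left_the_paying_company():
    # Given the same ticket, paid for by ACME
    ticket = Ticket(holder="J.B.", purchased_by="ACME")
    # When J.B. has left ACME before the class starts
    jb = Attendee(name="J.B.", employer=None)
    # Then, under the assumed rule, he can still join.
    # If the stakeholders disagree, we flip this expectation and let the
    # failing test show where the model must change.
    assert can_join_class(ticket, jb)


def test_borderline_colleague_replaces_the_holder():
    # Given J.B.'s ticket, When a colleague shows up in his place
    ticket = Ticket(holder="J.B.", purchased_by="ACME")
    colleague = Attendee(name="A.K.", employer="ACME")
    # Then, under the assumed rule, the colleague cannot take the seat.
    assert not can_join_class(ticket, colleague)


if __name__ == "__main__":
    test_main_scenario_holder_joins_the_class()
    test_borderline_holder_left_the_paying_company()
    test_borderline_colleague_replaces_the_holder()
    print("all scenarios pass")
```

The first test is the main scenario; the other two are the borderline examples, and they are the ones most likely to be overturned in a conversation with the experts, which is exactly the feedback we are looking for.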

The whole cycle is short (2-3 days, some teams are even faster), and can trigger feedback that challenges the assumptions, or puts us in a place where "Hey! It wasn't that hard after all!"

In this scenario there is almost no intermediate documentation - well, there's usually an 8-metre EventStorming artefact hanging on the wall for the length of the iteration - and we tend to go to the code as quickly as possible, because we want that code in production as quickly as possible: we are modelling the most important portion of the system, where time is (a lot of) money.

No trade-offs on quality (TDD and friends), but no sloppy bureaucratic processes either.

Another implicit requirement: "The people who write the code are part of the modelling session."

There are no handoffs. Handoffs are wrong for a ton of reasons, but here one is enough: they are unnecessary waste between understanding the problem and implementing a badly needed solution.

What about the documentation?

Well... it depends! What is this documentation for?

- Before-the-fact documentation can be redundant if we shorten the time between understanding and coding. We'll need more permanent documentation if we're exploring today and coding a couple of months later (but in this case you may have a serious process flaw that you want to solve instead of institutionalising the patch).

- After-the-fact documentation is a different matter. You should document as much as needed in your specific context, keeping in mind future readers who are not around today. Some industries have very strict compliance requirements for very good reasons (you don't want a nuclear reactor shutdown procedure pointing to some sketchnotes). In these situations documentation is a vital part of your development artefacts and should be treated accordingly.

Unsurprisingly, Domain-Driven Design as a community has provided a few answers to this problem. Living Documentation by Cyrille Martraire is the book on the topic.

What about my team?

For your team... the choice is up to you! Find the combination of tools that gives you and your teammates the most feedback. Fine-tune your tools (are wireframes good enough, or do we need to show colourful prototypes to get the right feedback?) according to what works for the people in your organisation, and feel free to drop tools and practices that don't bring any advantage.

Empty rituals and ceremonies are boring, and boredom kills learning.

Sometimes, properly handled mistakes are the fastest way to learn (if you're managing your risk correctly).

The bottleneck is learning and learning is subjective. Find the combination of tools where you learn the most.

Be ready to change tools and practices during the lifetime of the project. What helped a lot at the beginning may not help that much once a core set of practices is established. Be ready for twists in the opposite direction too: some apparently innocent maintenance changes can require deeper thought, maybe involving another round of collaborative modelling to envision the opportunities.

What about my agile process prescription?

Well, agile is meant to improve your team's effectiveness, not to slow you down. However, some agile implementations may turn into cages for high-value, learning-bound projects.

Iterations are a way to sample project state at given intervals - and this is a good thing - but they're also used to measure velocity, and this can be trickier.

If velocity becomes too important, perhaps for the sake of predictable progress, then cadence and repeatability become critical to keep velocity readable. Unfortunately, cadence and repeatability are also the key ingredients of boredom, which is the well-known arch-enemy of learning.

Interestingly, low-WiP Kanban implementations fit very well with the Whirlpool. Low WiP also means fewer topics going on in parallel, and therefore higher team focus in the right direction. I don't care about predictability, as long as I am making breakthroughs in learning.

Breakthroughs will destroy predictability in the right direction. ;-)

In general, in high-value projects you should be ready to play with a different set of rules:

- playing with more alternatives before choosing,

- breaking the cadence deliberately when massive learning is necessary,

- lowering the priority of progress tracking in exploratory moments (but being ready to put it under control when needed).

Putting it all together

If learning is the bottleneck, then most of the structure that comes with traditional software development processes can turn into an impediment. Sometimes you'll want to break the rules to maximise the learning instead of the predictable delivery of code that could miss the point.

Having a suite of tools really helps. Every tool will help you to a given extent, but no single tool will give you all the answers.

Weird as it sounds, boredom and impatience can be your best friends here. If a practice is not giving you insights, maybe it's time to switch to a different one.

Learn with Alberto Brandolini

Alberto is the trainer of the EventStorming Master Class (in-person), EventStorming Remote Experience Workshop and DDD Executive View Training (remote).

Check out the full list of our upcoming training courses: Avanscoperta Workshops.